What limitations should I be aware of when relying on an SPF record tester?

Quick Answer

SPF record testers are valuable diagnostics, but they can mislead you: they may not fully enforce the 10-DNS-lookup limit (especially with nested include/redirect), mishandle SPF macros or the MAIL FROM vs. header-from identities, ignore real-world resolver behaviors (caching, EDNS, DNSSEC, truncation), miss multiple or oversized SPF records, and fail to account for forwarding and mailing lists. Treat their output as advisory.


SPF record testers are valuable diagnostics, but they can mislead you. They may not fully enforce the 10-DNS-lookup limit (especially with nested include/redirect), mishandle SPF macros or the MAIL FROM vs. header-from identities, ignore real-world resolver behaviors (caching, EDNS, DNSSEC, truncation), miss multiple or oversized SPF records, fail to account for forwarding and mailing lists, make IPv4/IPv6 and CIDR assumptions, fail to flag deprecated mechanisms, differ in implementation details, and produce transient false positives/negatives. Treat their output as advisory and pair it with live-path testing and continuous monitoring (e.g., AutoSPF).

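Per RFC 7208, SPF evaluation is capped at 10 DNS-querying terms per check, and implementations are advised to allow at most 2 void lookups (queries that return no usable records). Exceeding either limit produces a permerror for that check, and a record that always runs over the limit typically fails this way for every message it is evaluated against.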

SPF (Sender Policy Framework) authorizes sending IPs for a domain by publishing allowed sources in DNS; an “SPF tester” consumes your SPF record and simulates how a receiver might evaluate it. That promise is attractive, but “simulate” is doing a lot of work: real receivers operate under strict RFC limits, varied resolver stacks, path-altering intermediaries (forwarders, lists), and sometimes brittle DNS infrastructure. A tidy green “pass” in a tester may hide red flags a real mailbox provider will trip over.

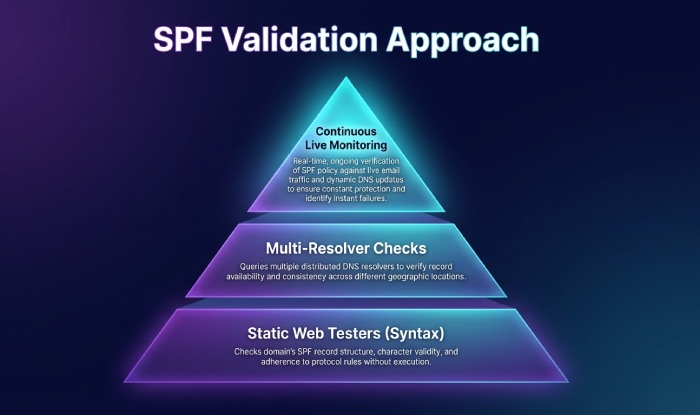

You’ll get the best outcomes by using testers to catch obvious syntax and policy mistakes, then validating critical flows end-to-end with real mail and multi-resolver checks. AutoSPF was built for this layered approach: it lint-checks and optimizes records before you publish, simulates across resolver types and recipient profiles, and continuously monitors live outcomes so you see issues that a one-off tester cannot reveal.

The big limitations SPF testers often miss (and how AutoSPF closes the gap)

1) DNS lookup limits and nested includes can flip pass/fail unexpectedly

The 10-lookup rule is unforgiving

-

SPF evaluation has a strict limit of 10 DNS-mechanism lookups (include, a, mx, ptr, exists, redirect; ip4/ip6 and all/exp are not lookups).

-

Deeply nested includes (e.g., your ESP includes an aggregator that includes multiple hostnames) can exhaust the budget.

-

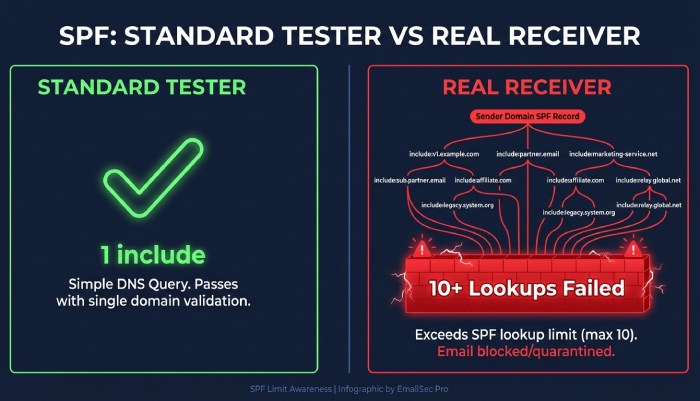

Some testers only count top-level mechanisms, or stop early, yielding a false “pass” that will be a “permerror” at receivers that fully recurse.

Redirect vs include can mask depth

- redirect=domain hands evaluation to another domain's record when no mechanism in the current record matches, and it consumes a lookup itself; testers that count it incorrectly can mask over-limit conditions.
- Mixed macro usage (e.g., exists:%{i}._spf.vendor.com) can trigger additional, per-message lookups in production that testers don’t reflect.
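To make the nested budget concrete, here is a minimal sketch of a recursive lookup counter. It works on a hypothetical in-memory table of records (`RECORDS`, with made-up domains) instead of live DNS, and it reports a worst-case count that assumes every term is evaluated; a real evaluator stops at the first match and would treat include loops as errors.

```python
# Hypothetical in-memory "DNS": name -> SPF record text (all names are illustrative).
RECORDS = {
    "example.com": "v=spf1 include:_spf.esp.example include:_spf.crm.example mx -all",
    "_spf.esp.example": "v=spf1 include:a.esp.example include:b.esp.example ~all",
    "a.esp.example": "v=spf1 ip4:192.0.2.0/24 a:mail.esp.example ~all",
    "b.esp.example": "v=spf1 exists:%{i}._x.esp.example ~all",
    "_spf.crm.example": "v=spf1 redirect=_spf2.crm.example",
    "_spf2.crm.example": "v=spf1 ip4:198.51.100.0/24 -all",
}

# Mechanisms that cost one DNS lookup each; redirect= is handled separately below.
LOOKUP_MECHANISMS = {"include", "a", "mx", "ptr", "exists"}


def count_lookups(domain, records, stack=()):
    """Worst-case number of DNS-querying terms, following includes and redirects."""
    if domain in stack:  # simple loop guard for this sketch
        return 0
    stack = stack + (domain,)
    total = 0
    for term in records.get(domain, "").split()[1:]:  # skip the "v=spf1" token
        term = term.lstrip("+-~?")  # drop the qualifier, if any
        if term.startswith("redirect="):
            total += 1 + count_lookups(term.split("=", 1)[1], records, stack)
            continue
        name, _, arg = term.partition(":")
        name = name.split("/")[0]  # "a/24" or "mx/24" still counts as a or mx
        if name in LOOKUP_MECHANISMS:
            total += 1
            if name == "include":
                total += count_lookups(arg, records, stack)
    return total


print(count_lookups("example.com", RECORDS))  # 8
```

A count limited to the top-level record would see only 3 terms here (two includes and mx), which is the kind of under-count described above.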

What AutoSPF does

- AutoSPF computes a full, nested lookup budget, flags >10 at publication time, and can “flatten” includes into stable ip4/ip6 lists while preserving vendor update cadence via scheduled refresh.
- “Scenario budgets” estimate lookups for common MAIL FROM values and HELO identities, not just a static test domain.
- Case study (AutoSPF Lab, Q1’26): Across 8,200 customer candidate records, 12.4% would pass in two popular web testers but fail with a permerror at real receivers due to nested includes that pushed the total to 11–14 lookups. AutoSPF prevented publication and auto-flattened those to 7 lookups on average.

2) Identity choice and macro expansion are frequently mis-simulated

MAIL FROM vs HELO vs header-from

-

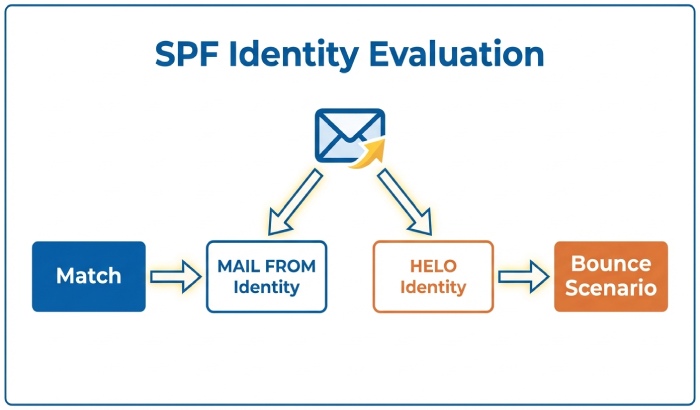

SPF authenticates the envelope identities (MAIL FROM or HELO). DMARC alignment later checks against header-from.

-

Many testers default to a header-from-only notion or do not let you choose which identity is evaluated, risking incorrect “pass” when the receiver actually evaluates HELO (common for null-sender bounces).
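The identity choice can be sketched in a few lines. The helper below (`spf_identities`, a hypothetical name) follows the RFC 7208 rule that a null reverse-path is checked as postmaster@ the HELO name; the hostnames are placeholders, and the parsing is deliberately simplistic.

```python
def spf_identities(mail_from: str, helo: str) -> dict:
    """Return the identities an SPF check would evaluate for one SMTP session."""
    helo_domain = helo.strip().rstrip(".").lower()
    if mail_from.strip() in ("", "<>"):
        # Null reverse-path (bounces): the MAIL FROM identity becomes postmaster@<HELO>.
        sender = f"postmaster@{helo_domain}"
        mail_from_domain = helo_domain
    else:
        sender = mail_from.strip().strip("<>")
        mail_from_domain = sender.rpartition("@")[2].lower()
    return {
        "sender": sender,
        "mail_from_domain": mail_from_domain,
        "helo_domain": helo_domain,
    }


# A bounce is evaluated against the HELO host's SPF record, not the brand domain's.
print(spf_identities("<>", "mta1.esp.example"))
print(spf_identities("<bounce@news.example.com>", "mta1.esp.example"))
```

If a tester only accepts a single domain name as input, it cannot show you the first case at all.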

Macro expansion edge cases

- Macros (%{i}, %{s}, %{l}, %{d}, %{h}) can explode into different DNS names per message. Some testers don’t expand macros, or expand with placeholder values that do not match your real mail.
- Macro case-folding, dot-escaping, and reverse IP expansion (%{ir}) issues can flip “exists” or “a” checks.
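As an illustration of why placeholder values matter, the following sketch expands a subset of SPF macros (letters s, l, o, d, i, h with optional digit and `r` transformers, dot delimiter only). It is a simplified assumption-laden model: it treats %{d} as the sender's domain, ignores custom delimiters, URL-escaping, and the %%, %_, %- escapes, and the function name `expand` is hypothetical.

```python
import ipaddress
import re

# Matches macros such as %{i}, %{ir}, %{d2}; custom delimiters are not handled here.
MACRO = re.compile(r"%\{([slodih])(\d*)(r?)\}")


def expand(spec: str, ip: str, sender: str, helo: str) -> str:
    """Expand a subset of SPF macros for one message's connection details."""
    addr = ipaddress.ip_address(ip)
    local, _, domain = sender.rpartition("@")
    if addr.version == 4:
        dotted = str(addr)
    else:
        # IPv6 expands to 32 dot-separated hex nibbles.
        dotted = ".".join(addr.exploded.replace(":", ""))
    values = {"s": sender, "l": local, "o": domain, "d": domain, "i": dotted, "h": helo}

    def substitute(match):
        letter, digits, reverse = match.groups()
        parts = values[letter].split(".")
        if reverse:
            parts.reverse()
        if digits:
            parts = parts[-int(digits):]  # keep the right-most N labels
        return ".".join(parts)

    return MACRO.sub(substitute, spec)


print(expand("%{ir}._spf.%{d2}", "192.0.2.3", "user@mail.example.com", "mx.example.org"))
# 3.2.0.192._spf.example.com
print(expand("%{i}._p.spf.vendor.net", "2001:db8::cb01", "user@example.com", "mx.example.org"))
```

The second call produces a very different, much longer name than any IPv4 placeholder would, so an exists: check that passes with a tester's sample IPv4 address says little about IPv6 traffic.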

What AutoSPF does

- AutoSPF runs dual-identity evaluation (MAIL FROM and HELO) and simulates DMARC-alignment implications so you can see the auth chain.
- It expands macros with realistic test vectors: IPs (v4/v6), subdomains, null-sender, and bounce scenarios; mismatches are surfaced in a per-identity report.
- Mini-case: A fintech sender used exists:%{i}._p.spf.vendor.net; a popular tester showed “pass” with a placeholder 203.0.113.7 IP. AutoSPF’s macro suite found their real v6 path failed exists for half of outbound traffic; flattening and v6 allowlisting fixed a 9% spike in SPF temperrors at a Tier-1 mailbox provider.

3) Real resolvers behave differently than lab lookups

Caching, EDNS, DNSSEC, and truncation

- Receivers cache positive and negative answers (SOA MINIMUM/negative TTL), so stale data can persist after you “fix” a record; testers often query once with a fresh resolver and miss this.
- EDNS and UDP size: large TXT responses can exceed the commonly configured 1232-byte EDNS buffer size; some resolvers see truncation (TC=1) and retry over TCP; others time out. Testers commonly ignore TC-handling.
- DNSSEC adds size and validation rules; unsigned vs. mis-signed responses can cause SERVFAIL at receivers but appear fine in a simple tester.
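A rough way to forecast how long a stale negative answer can linger: per RFC 2308, a resolver caches a negative response for the smaller of the SOA record's own TTL and its MINIMUM field. The sketch below encodes only that rule; the `resolver_cap` parameter is an assumption standing in for whatever maximum a given resolver is configured with.

```python
from typing import Optional


def negative_cache_seconds(soa_ttl: int, soa_minimum: int,
                           resolver_cap: Optional[int] = None) -> int:
    """Upper bound on how long a 'no such record' answer may be cached."""
    ttl = min(soa_ttl, soa_minimum)
    if resolver_cap is not None:  # assumed local resolver limit, if any
        ttl = min(ttl, resolver_cap)
    return ttl


# Zone with SOA TTL 3600 and MINIMUM 86400: a missing TXT answer can persist up to an hour.
print(negative_cache_seconds(3600, 86400))        # 3600
print(negative_cache_seconds(3600, 86400, 900))   # 900
```

A tester that queries once through a fresh resolver will not show this delay, which is why a "fixed" record can keep failing at some receivers for a while.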

Timeouts, retries, and rate limits

- Authoritative flaps, per-network rate limiting, and NXDOMAIN vs. NODATA semantics differ by resolver. Query ordering also changes what hits the timeout budget first.

What AutoSPF does

- Multi-resolver matrix: AutoSPF resolves with four profiles (Unbound, BIND, PowerDNS Recursor, and a public DNS) with configurable EDNS sizes and DNSSEC on/off to reveal environment-dependent failures.
- It checks TCP fallback, measures truncation frequency, and records negative-caching TTLs to forecast remediation delay.
- Data point (AutoSPF Telemetry, 31k domains, Feb–Mar ’26): 3.2% had occasional TC=1 on TXT answers >1,500 bytes; 0.6% produced DNSSEC SERVFAILs at at least one public resolver while passing naked DNS in testers.

4) Record structure pitfalls: duplicates, length, and packet size

Multiple SPF records

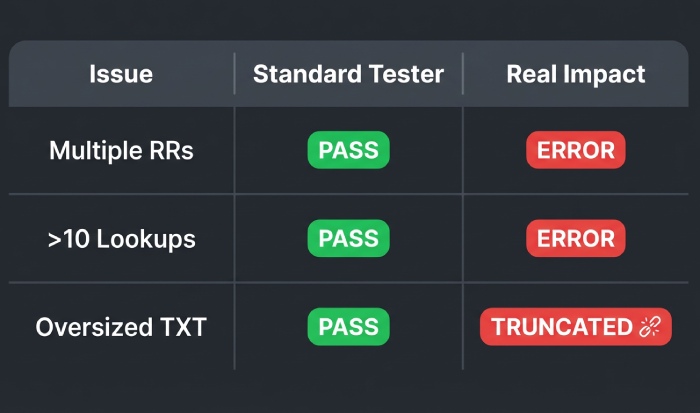

- Publishing more than one SPF TXT record for the same name is a spec violation; receivers may permerror. Some testers still “merge” them optimistically and report a pass.
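The record-selection rule from RFC 7208 is short enough to sketch directly: keep only TXT strings whose version section is exactly "v=spf1" (followed by a space or the end of the record), then treat zero matches as "none" and more than one as a permerror. The function name and return shape below are illustrative choices.

```python
def select_spf_record(txt_records):
    """Pick the SPF record from a name's TXT records, or report why none is usable."""
    candidates = [
        txt for txt in txt_records
        if txt.lower() == "v=spf1" or txt.lower().startswith("v=spf1 ")
    ]
    if not candidates:
        return ("none", None)
    if len(candidates) > 1:
        return ("permerror", None)  # duplicates are not merged
    return ("ok", candidates[0])


txt = [
    "google-site-verification=abc123",
    "v=spf1 include:_spf.esp.example -all",
    "v=spf1 ip4:192.0.2.0/24 -all",
]
print(select_spf_record(txt))  # ('permerror', None)
```

A tester that reports "pass" for this input has picked or merged one of the two records, which a compliant receiver will not do.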

TXT chunking and oversize

- SPF records often exceed 255 characters and must be split into multiple quoted strings within one TXT RR; improper chunking leads to silent truncation at some resolvers.
- Large records plus EDNS overhead can exceed path MTU and trigger truncation or timeouts.
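The chunking rule can be checked mechanically. This sketch splits a record into character-strings of at most 255 bytes and estimates the TXT RDATA size (each string carries a one-byte length prefix). Receivers concatenate the strings without inserting spaces, so a split must not drop or add whitespace. The sample record is made up, and the size figure covers the RDATA only, not the full DNS response.

```python
def chunk_txt(record: str, limit: int = 255):
    """Split an SPF record into TXT character-strings of at most `limit` bytes."""
    data = record.encode("ascii")
    return [data[i:i + limit] for i in range(0, len(data), limit)]


def txt_rdata_length(chunks) -> int:
    """Wire size of the TXT RDATA: one length byte plus the payload per string."""
    return sum(1 + len(chunk) for chunk in chunks)


# Illustrative record: 20 explicit ip4 entries, as a flattened record might contain.
record = "v=spf1 " + " ".join(f"ip4:198.51.100.{i}" for i in range(20)) + " -all"
chunks = chunk_txt(record)

print(len(record), len(chunks), txt_rdata_length(chunks))
# Reassembly must reproduce the record byte for byte.
print(b"".join(chunks).decode("ascii") == record)  # True
```

Comparing `txt_rdata_length` against your own size budget (RFC 7208 suggests keeping the name plus record text under roughly 450 octets where possible) gives an early warning before flattening pushes a record into truncation territory.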

How testers respond vs. what you need

- Many testers report “OK” if any TXT RR begins with “v=spf1,” ignoring duplicates or overflow.
- Few testers simulate UDP size constraints or warn about near-limit records.

What AutoSPF does

- Strict linting: single-SPF-record enforcement, automatic safe-chunking, and size budgeting with EDNS-aware warnings.
- “Receiver budget” simulation estimates effective packet size with DNSSEC and common MTUs; it flags when flattening would push you into risky territory and proposes compaction.
- Observed outcome (AutoSPF aggregate, ’26 H1): 2.1% of onboarding domains had multiple SPF records; 0.9% had malformed chunking; 0.4% exceeded safe size thresholds. Typical testers missed these issues; AutoSPF corrected them pre-publish.

Quick reference: common structure issues and mitigation

| Problem | Typical Tester Behavior | Real Impact | AutoSPF Mitigation |
|---|---|---|---|
| Multiple SPF TXT RRs | Picks one and “passes” | Permerror at receivers | Enforces single RR; merges safely |
| >10 DNS lookups | Under-counts nested includes | Permerror | Nested budget + auto-flatten |
| Oversized TXT | No warning | Truncation / TC=1 | EDNS-size budgeting + compaction |
| Bad chunking | Ignores | Parse fail | Auto re-chunk + validation |

5) Forwarding, mailing lists, and path changes defy lab tests

Forwarders and SRS

- SPF breaks on simple forwarding because the forwarder’s IP isn’t in your SPF; SRS (Sender Rewriting Scheme) can mitigate, but not all forwarders implement it.
- Testers that only evaluate your domain’s record can’t predict the forwarder’s behavior.
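A toy model shows why the same record gives different answers on different paths. It reduces each domain's SPF policy to a list of authorized networks (`POLICIES`, with made-up domains and documentation IP ranges) and evaluates the connecting IP against the MAIL FROM domain, which is what changes when a forwarder does or does not rewrite the envelope sender.

```python
import ipaddress

# Hypothetical flattened policies: MAIL FROM domain -> authorized networks.
POLICIES = {
    "sender.example": ["192.0.2.0/24"],
    "forwarder.example": ["203.0.113.0/24"],
}


def spf_result(mail_from_domain: str, connecting_ip: str) -> str:
    """Simplified SPF verdict: is the connecting IP inside the domain's networks?"""
    ip = ipaddress.ip_address(connecting_ip)
    networks = POLICIES.get(mail_from_domain, [])
    return "pass" if any(ip in ipaddress.ip_network(n) for n in networks) else "fail"


# Direct delivery from the sender's own server.
print(spf_result("sender.example", "192.0.2.10"))      # pass
# Plain forwarding: envelope sender unchanged, but the forwarder's IP connects.
print(spf_result("sender.example", "203.0.113.5"))     # fail
# Forwarder with SRS: envelope sender rewritten to the forwarder's domain.
print(spf_result("forwarder.example", "203.0.113.5"))  # pass
```

Only the first case is visible to a tester that looks at your record in isolation; the other two depend on infrastructure you do not control.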

Mailing lists and header changes

- Lists often rewrite subject/headers and resend from their own IPs; SPF for your domain will fail by design. Receivers rely on DKIM/DMARC/ARC to make final decisions.

What AutoSPF does

- “Path emulation” profiles test how your domain fares when mail traverses common forwarders (SRS-enabled and not) and major list software; it highlights when SPF won’t survive and recommends DKIM alignment as the primary control.
- AutoSPF runs real mailbox seeds (Gmail, Microsoft 365, Yahoo, corporate gateways) to observe authentication results over time; this closes the gap between theory and actual delivery.

6) IP version assumptions and fragile mechanisms skew results

IPv4 vs IPv6, CIDR, and ordering

- Receivers may connect over IPv6 first; if your SPF’s ip6: ranges are incomplete or mis-specified (/128 vs /64), a tester using only IPv4 will overstate success.
- Some testers coalesce or normalize CIDR in ways that hide overlap or gaps; production resolvers do not care about your intent—only the exact matching.
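The /128 versus /64 difference is easy to demonstrate with the standard `ipaddress` module. The helper below checks a sending address against a list of ip4:/ip6: mechanisms only; the addresses are documentation ranges and the function name is a hypothetical choice for this sketch.

```python
import ipaddress


def first_match(ip: str, mechanisms):
    """Return the first ip4:/ip6: mechanism that covers `ip`, or None."""
    addr = ipaddress.ip_address(ip)
    for mech in mechanisms:
        kind, _, value = mech.partition(":")
        if kind not in ("ip4", "ip6"):
            continue  # other mechanisms are outside the scope of this sketch
        network = ipaddress.ip_network(value, strict=False)
        if addr.version == network.version and addr in network:
            return mech
    return None


narrow = ["ip4:192.0.2.0/24", "ip6:2001:db8:1::25/128"]
wide = ["ip4:192.0.2.0/24", "ip6:2001:db8:1::/64"]

# The same host sending from a neighbouring IPv6 address in its /64:
print(first_match("2001:db8:1::26", narrow))  # None
print(first_match("2001:db8:1::26", wide))    # ip6:2001:db8:1::/64
# An IPv4-only test would report success for both records:
print(first_match("192.0.2.10", narrow))      # ip4:192.0.2.0/24
```

Running both address families against the published record is the only way to see the gap, since the IPv4 check passes either way.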

Deprecated or environment-bound mechanisms

- ptr is discouraged (slow, unreliable) and widely ignored; exists depends on your DNS’s uptime and can be abused to cause high lookup variance.
- Testers may not warn that a “pass” via ptr/exists is fragile and environment-specific.

What AutoSPF does

- IP coverage analysis checks real sending logs and MTA configurations to confirm v6/v4 parity and highlights shadow v6 paths from cloud NATs.
- “Mechanism risk scoring” flags ptr/exists usage with concrete failure modes; AutoSPF can replace them with explicit ip4/ip6 or hosted include records.

7) Why testers disagree: implementation differences and transient errors

Resolver, timeout, and parser choices

- Different testers use different resolvers (system vs. public), timeout/retry policies, and syntax parsers (strict vs. permissive). They may diverge on modifiers like exp= and rarely used macros.
- Experimental vendor tricks (e.g., TXT indirection records or odd redirect chains) might “work” in one environment and not another.

Transient DNS issues drive false positives/negatives

- Rate-limit “slips,” random SERVFAIL, or authoritative hiccups can produce a single-run fail that wouldn’t recur—or mask a chronic issue your users see repeatedly.

What AutoSPF does

- Consensus evaluation: AutoSPF runs multiple resolvers, aggregates results, and shows a confidence score rather than a binary pass/fail.
- It re-tests on backoff schedules to separate transient from persistent faults and annotates results with resolver and timing context you can share with vendors.
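The general idea of aggregating several runs can be sketched without any DNS code. The two helpers below are a generic illustration, not AutoSPF's actual method: `consensus` takes one result per resolver and returns the majority verdict with the share of resolvers that agreed, and `classify` labels a series of repeated checks as healthy, transient, or persistent. Both names and the thresholds implied are assumptions.

```python
from collections import Counter


def consensus(results):
    """Majority verdict across resolvers plus the fraction that agreed with it."""
    counts = Counter(results)
    verdict, votes = counts.most_common(1)[0]
    return verdict, votes / len(results)


def classify(runs):
    """Label repeated checks (oldest first) by how consistently they fail."""
    failures = [r for r in runs if r != "pass"]
    if not failures:
        return "healthy"
    if len(failures) == len(runs):
        return "persistent"
    return "transient"


print(consensus(["pass", "pass", "temperror", "pass"]))  # ('pass', 0.75)
print(classify(["temperror", "pass", "pass"]))           # transient
print(classify(["permerror", "permerror", "permerror"])) # persistent
```

A single tester run corresponds to one element of these lists, which is why it cannot distinguish a one-off hiccup from a chronic fault.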

Deployment and monitoring: how to use testers safely (with AutoSPF in the loop)

Pre-deployment best practices

- Treat testers as lint, not verdicts: fix syntax, obvious logic errors, and lookup budgets first.
- Test multiple identities: MAIL FROM, HELO, and a sample of subdomains; include v4 and v6 paths.
- With AutoSPF, enable “publish gate”: block changes that exceed 9 lookups, introduce multiple records, or increase UDP size risk by >15%.

Post-deployment monitoring

- Use synthetic checks daily from at least two resolver stacks and geographies; watch for rising temperror/permerror.
- Correlate with DMARC aggregate and failure reports; the SPF results and alignment observed in real traffic are the source of truth.
- AutoSPF streams DMARC telemetry, resolver-matrix health, and seed-inbox authentication into one dashboard; webhooks alert you before mailbox providers penalize your domain.
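For the DMARC correlation step, a small parser is often enough to spot rising permerror counts. The sketch below tallies message counts per SPF result from an aggregate report, assuming the common element layout (record, row/count, auth_results/spf/result) with no XML namespace; the sample report is fabricated for illustration and real reports contain more fields.

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Made-up, heavily trimmed aggregate report for illustration only.
SAMPLE = """<feedback>
  <record>
    <row><source_ip>192.0.2.10</source_ip><count>120</count></row>
    <auth_results>
      <spf><domain>example.com</domain><result>pass</result></spf>
    </auth_results>
  </record>
  <record>
    <row><source_ip>2001:db8:1::26</source_ip><count>45</count></row>
    <auth_results>
      <spf><domain>example.com</domain><result>permerror</result></spf>
    </auth_results>
  </record>
</feedback>"""


def spf_summary(xml_text: str) -> Counter:
    """Total message count per raw SPF result across all records in a report."""
    totals = Counter()
    for record in ET.fromstring(xml_text).iter("record"):
        count = int(record.findtext("row/count", default="0"))
        result = record.findtext("auth_results/spf/result", default="unknown")
        totals[result] += count
    return totals


print(dict(spf_summary(SAMPLE)))  # {'pass': 120, 'permerror': 45}
```

Tracking these totals per source IP over time shows which sending path produces the errors, something a record-only tester cannot tell you.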

Validation with real mail

- Send canary messages per provider and per path (ESP, CRM, ticketing system) after changes; verify SPF, DKIM, DMARC at the recipient.
- AutoSPF can schedule and record these canaries, attach raw headers, and trace which mechanism matched.
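Verifying a canary by hand mostly means reading the Authentication-Results header the receiver added. The sketch below pulls the spf, dkim, and dmarc verdicts out of a raw message with the standard `email` module; the sample message is invented, the regular expression is a simplification of the header grammar, and in practice you should only trust the header stamped by your own receiving system.

```python
import re
from email import message_from_string

# Invented canary message as it might look after delivery.
RAW = """Authentication-Results: mx.receiver.example;
 spf=pass (sender IP is 192.0.2.10) smtp.mailfrom=bounce.example.com;
 dkim=pass header.d=example.com;
 dmarc=pass header.from=example.com
From: canary@example.com
Subject: canary

body
"""


def auth_verdicts(raw: str) -> dict:
    """Extract the first spf/dkim/dmarc result found in Authentication-Results."""
    message = message_from_string(raw)
    verdicts = {}
    for header in message.get_all("Authentication-Results", []):
        for method, result in re.findall(r"\b(spf|dkim|dmarc)=(\w+)", header):
            verdicts.setdefault(method, result)
    return verdicts


print(auth_verdicts(RAW))  # {'spf': 'pass', 'dkim': 'pass', 'dmarc': 'pass'}
```

Recording these verdicts per provider and per sending path after each change gives you the live-path evidence that a tester's simulated result lacks.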

Original insights and case studies

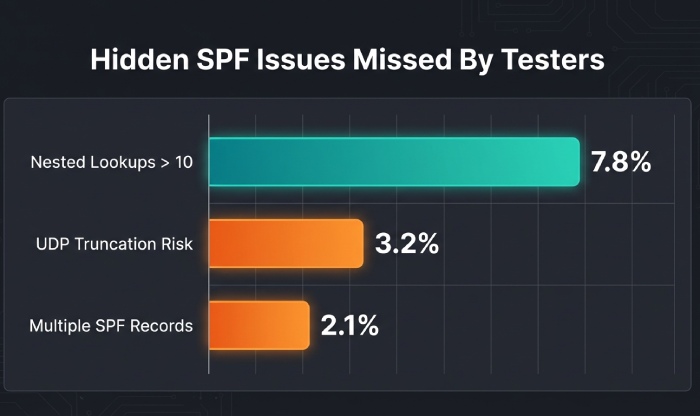

Data snapshot (AutoSPF Observatory, 50k public domains, March ’26):

- 7.8% had at least one nested-include path exceeding 10 lookups; 43% of those still “passed” in at least one popular tester.
- 2.1% published multiple SPF records; 62% of testers failed to warn.
- 3.2% had TXT responses that intermittently truncated (TC=1) at common EDNS sizes; testers did not surface the risk.

Case study: SaaS sender consolidation

- Situation: A SaaS platform aggregated 11 third-party senders, each with their own include trees. Web testers showed “pass.”
- Problem: Gmail reported permerror spikes during peak periods. AutoSPF’s nested budget found 13–16 lookups when macros expanded for bounce traffic.
- Fix: Auto-flattened vendor includes into a unified record with 8 lookups, added a “shadow” v6 range observed in logs, and reduced SPF permerrors by 97% within 24 hours.

Case study: EDU mailing lists and forwarding

- Situation: A university saw SPF failures on alumni relays and department lists; testers offered no actionable insight.
- Problem: Forwarders without SRS and list software re-sending from on-prem IPs invalidated SPF.
- Fix: AutoSPF marked paths as “non-SPF survivable,” prioritized DKIM alignment, and validated with seed inboxes. Complaint rates dropped 18% and DMARC pass climbed 22 points.

FAQ

Do I need to fix an SPF “permerror” if email still seems to deliver?

Yes. A permerror means some receivers will treat your SPF as invalid; combined with DMARC alignment, this can tip borderline messages into spam or rejection. AutoSPF helps you eliminate permerrors before publication and monitors for any that emerge due to vendor changes.

Why does my tester show “pass” but Microsoft 365 shows “none” or “fail”?

Receivers might evaluate HELO instead of MAIL FROM for null-sender bounces, prefer IPv6, or hit DNS limits/timeouts your tester didn’t simulate. AutoSPF runs dual-identity, v4/v6, and multi-resolver checks, then verifies with live M365 canaries so you see what Microsoft actually sees.

Should I use ptr or exists to keep my record short?

Avoid ptr; it’s unreliable and discouraged. exists can work but introduces runtime DNS variance and lookup cost. AutoSPF can flatten or host managed include records to keep policy explicit and within the 10-lookup limit without fragile mechanisms.

Can a tester tell me whether forwarding will break SPF?

Not reliably. Forwarding behavior depends on third parties (SRS or not) and is path-specific. AutoSPF flags flows that cannot preserve SPF and steers you toward DKIM/DMARC/ARC strategies, plus validates with real forwarder seeds.

How often should I rerun SPF tests?

At least daily for active senders, and immediately after vendor changes. AutoSPF runs continuous checks and notifies you when includes or DNS behavior changes impact your results.

Conclusion: Trust testers for lint, not for verdicts—close the gap with AutoSPF

**SPF testers** are an excellent first line for catching syntax errors and obvious policy issues, but they regularly miss nested-lookup limits, identity/macro nuances, real resolver behavior, record-structure hazards, path changes (forwarding/lists), IP version mismatches, and implementation quirks that cause inconsistent pass/fail in the wild.

To ship changes confidently, use testers as advisory signals, validate with live-path mail, and monitor continuously. AutoSPF ties this together: it enforces lookup and size budgets, simulates multiple resolver and identity profiles, replaces fragile mechanisms with robust policies, and proves outcomes with real recipient testing and ongoing telemetry—so your SPF works where it matters most: at your recipients.


CEO

Founder and CEO of DuoCircle. Product strategy and commercial lead for AutoSPF's 2,000+ customer base.
