AI-Powered Phishing in 2026: How Generative AI Changed the Attacker Economics of Email

Why Email Authentication Is the Last Reliable Defense Signal in the Age of AI

TL;DR

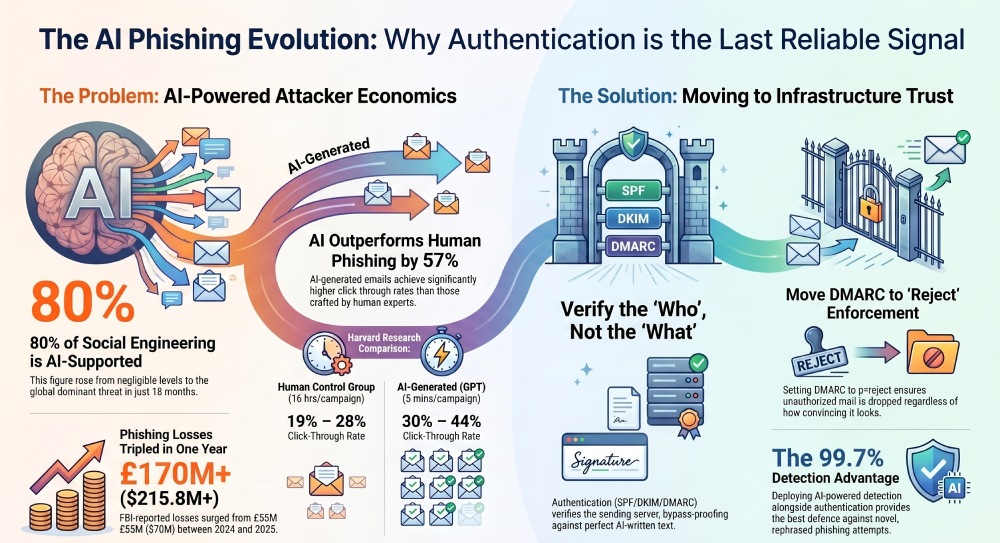

- 80%+ of social engineering is now AI-supported, up from negligible levels three years ago. Adversaries leverage jailbroken models, synthetic media, and model poisoning to enhance operational effectiveness (ENISA Threat Landscape 2025).

- 16% of data breaches already involve AI-powered attacks, with AI-generated phishing as the #1 AI attack type (37%) and deepfake impersonation as #2 (35%). Average phishing-initiated breach cost: $4.8 million (IBM Cost of a Data Breach 2025).

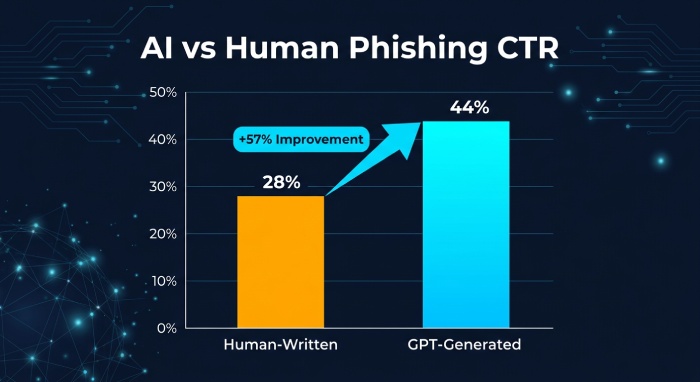

- GPT-generated phishing outperforms human-written phishing by 50-57% in click-through rates. In a Harvard-led controlled experiment, AI emails achieved 30-44% clicks vs 19-28% for human control (Heiding et al., Black Hat USA 2023).

- Novice attackers gain a 400% improvement in task completion and 57% reduction in attack time when assisted by GenAI compared to traditional internet resources (Multivector Phishing study).

- Current defenses are failing. LLM-rephrased phishing significantly evades Gmail, SpamAssassin, and Proofpoint, the three most common enterprise email filters (Next-Gen Phishing study). FBI phishing losses tripled in one year from $70M to $215.8M (FBI IC3 2025).

- Email authentication is the last machine-verifiable signal. Content-based detection fails against grammatically perfect AI text. SPF/DKIM/DMARC verify infrastructure, not content, making authentication MORE critical in the AI era, not less.

1. The economics shifted: what AI actually changed

For twenty years, phishing had a craft problem. A convincing spear-phishing email required language fluency, cultural context, target research, and time: roughly 16 hours per quality campaign email by IBM’s pre-2024 estimates. The limiting factor was human labor. Sophisticated campaigns were expensive, and that capped the attacker’s volume.

Generative AI removed the cap. It did so not by making phishing possible (it was always possible) but by collapsing the cost structure. What required 16 hours of skilled human labor now takes 5 minutes of prompted AI output. The economics of the attack changed from a labor problem to an engineering problem, and engineering problems scale.

The institutional data confirms the shift is not theoretical. ENISA’s 2025 Threat Landscape report, analyzing 4,875 cybersecurity incidents across the July 2024 to June 2025 reporting period, states that AI-supported phishing campaigns reportedly represented more than 80 percent of observed social engineering activity worldwide. Adversaries are leveraging ‘jailbroken models, synthetic media and model poisoning techniques to enhance their operational effectiveness.’ Three years ago, that figure was negligible. The transition from experimental to dominant happened in approximately 18 months.

IBM’s Cost of a Data Breach Report 2025 quantifies the breach-side impact: 1 in 6 breaches (16%) now involve attackers using AI. Of those AI-involved breaches, 37% used AI-generated phishing content and 35% used deepfake impersonation. Phishing remains the overall #1 initial attack vector at 16% of all breaches, with an average cost of $4.8 million per incident. The AI layer is making the most expensive attack category even more effective.

IBM X-Force’s 2025 Threat Intelligence Index documents the offensive infrastructure evolving alongside the content: adversary-in-the-middle (AITM) phishing kits and custom AITM attack services are being sold on the dark web to bypass MFA defenses. Meanwhile, on the defensive side, only 24% of enterprise generative AI projects are properly secured, meaning 76% of those projects lack adequate security controls for the AI systems themselves, let alone defenses against AI-generated attacks.

“AI-supported phishing campaigns reportedly represented more than 80 percent of observed social engineering activity worldwide, with adversaries leveraging jailbroken models, synthetic media and model poisoning techniques to enhance their operational effectiveness.”, ENISA Threat Landscape 2025 (PDF)

What it means: The shift from ‘AI could be used for phishing’ to ‘AI is used in 80%+ of phishing’ happened between 2023 and 2025. Any defensive posture that assumes human-crafted phishing as the baseline threat model is already obsolete. The economics permanently favor the attacker on the content-generation side. The defender’s advantage must come from elsewhere, and the only ‘elsewhere’ that doesn’t depend on analyzing AI-generated content is infrastructure verification: email authentication.

2. What the controlled experiments actually show

The institutional statistics tell you the scale of the shift. The academic experiments tell you the mechanism, and why traditional defenses fail against it.

Harvard / Black Hat USA: GPT phishing outperforms human phishing

Heiding et al., presented at Black Hat USA 2023, conducted a controlled experiment with 112 randomly selected participants. The design was rigorous: a control group received generic human-written phishing emails; treatment groups received GPT-generated phishing emails crafted with the same targeting parameters. The results were unambiguous.

The control group, which received human-written phishing, had a click-through rate between 19% and 28%. The GPT-generated emails achieved 30% to 44%. That is a 50-57% relative improvement in phishing effectiveness from AI-generated content, in a Harvard-led study presented at Black Hat USA. The AI didn’t just match human performance; it exceeded it by a margin that any marketer would consider transformative.
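The relative lift can be checked directly from the click-through ranges quoted above. The sketch below is illustrative arithmetic only: `relative_lift` is a hypothetical helper, and pairing the low ends and the high ends of the two ranges is an assumption about how the comparison is made. The study itself may pair or aggregate its groups differently.

```python
def relative_lift(control_rate: float, treatment_rate: float) -> float:
    """Return the relative increase of treatment over control, as a fraction."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (treatment_rate - control_rate) / control_rate


# Click-through ranges quoted in the text, as fractions.
human_low, human_high = 0.19, 0.28
ai_low, ai_high = 0.30, 0.44

# Assumption: compare low end with low end, and high end with high end.
low_lift = relative_lift(human_low, ai_low)
high_lift = relative_lift(human_high, ai_high)

print(f"low-end lift:  {low_lift:.1%}")
print(f"high-end lift: {high_lift:.1%}")
```

Under this pairing both ends land between 57 and 58 percent, which matches the upper end of the range cited above.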

The novice-empowerment effect: 400% improvement, 57% time reduction

A separate study, Jailbreaking Generative AI: Multivector Phishing Threats, conducted controlled laboratory experiments with 30 participants, measuring what happens when novice attackers are given GenAI assistance versus traditional internet resources. The results quantify the democratization of attack capability:

- 240% increase in perceived phishing competence

- 400% improvement in task completion rates

- 57% reduction in implementation time

- 25% credential compromise rate achieved against security-aware participants using exclusively GenAI-generated content

The 25% compromise rate against security-aware participants is the number to internalize. These were not unsophisticated targets. One in four still submitted credentials to AI-generated phishing, because the content was grammatically perfect, contextually appropriate, and indistinguishable from legitimate institutional communication.

The 9,000-person university study: 11 months of real-world data

The most operationally relevant study is the Lateral Phishing with Large Language Models experiment, a pioneering 11-month campaign targeting a large tier-1 university’s entire workforce of approximately 9,000 individuals. Unlike lab studies, this tested LLM phishing in a real organizational environment with real email infrastructure and real users going about their daily work.

The researchers found that LLM-generated lateral phishing emails were ‘strikingly similar to traditional human-generated lateral spear phishing attacks.’ Approximately 10% of email recipients submitted their login credentials when targeted by LLM-crafted phishing, a rate consistent with well-crafted human spear-phishing campaigns, achieved at a fraction of the time and cost.

“Even inexperienced users can execute sophisticated phishing campaigns with current AI tools, emphasizing the urgent need for stronger cybersecurity measures and heightened user awareness in the age of AI.”, Mishra et al., IIT Jammu (arXiv, 2025)

What it means: Three independent studies (one from Harvard, one in a controlled laboratory, and one real-world 9,000-person deployment) converge on the same conclusion: AI-generated phishing is at least as effective as expert human phishing and dramatically more accessible to novice attackers. The quality floor of phishing has risen permanently. The grammar errors, awkward phrasing, and formatting tells that employees were trained to spot are gone. The defender can no longer rely on content analysis, by humans or by traditional filters, as the primary detection mechanism.

3. Why your current defenses are failing

The academic evidence does not stop at demonstrating that AI phishing is effective. It also demonstrates that current enterprise email filters are being systematically evaded.

Research evaluating Gmail’s spam filter, SpamAssassin, and Proofpoint, three of the most widely deployed email security platforms, found that LLM-rephrased phishing emails significantly reduced detection rates across all three. The mechanism is straightforward: these filters were trained on patterns characteristic of human-written phishing (specific phrasing, structural patterns, known-bad templates). LLM-rephrased content generates novel phrasing that triggers none of the trained patterns while conveying the same malicious intent.

Researchers from Purdue University and Rochester Institute of Technology articulate the problem precisely: ‘Unlike earlier phishing attempts which are identifiable by grammatical errors, misspellings, incorrect phrasing, and inconsistent formatting, LLM generated emails are grammatically sound, contextually relevant, and linguistically natural. These advancements make phishing emails increasingly difficult to distinguish from legitimate ones, challenging traditional detection mechanisms.’

The threat is compounding. The SpearBot framework (arXiv, December 2024) demonstrates that LLMs can now autonomously optimize phishing content through generative-critique loops, generating an initial phishing draft, evaluating its deceptive quality using a critique model, then iterating to improve. The phishing isn’t just AI-generated; it is AI-refined. Each iteration produces more convincing content without human involvement.

And the threat is industrialized. Academic analysis of the malicious AI ecosystem documents the emergence of purpose-built tools, FraudGPT and WormGPT, designed specifically for social engineering with no ethical guardrails. These are not jailbroken versions of legitimate models; they are dedicated attack platforms, marketed on dark web forums for generating phishing content, creating fake identities, and crafting business email compromise campaigns. ENISA’s 2025 report further documents PhaaS (Phishing-as-a-Service) platforms like Darcula, impersonating over 200 organizations across 100+ countries, and Lucid, operating across 88 countries via iMessage and RCS.

What it means: The defensive paradigm that most enterprises operate under, content-based filtering plus user awareness training, was designed for a world where phishing had detectable content signatures. That world ended between 2023 and 2025. When Purdue/RIT researchers say AI phishing is ‘grammatically sound, contextually relevant, and linguistically natural,’ they are describing content that human readers and pattern-trained filters struggle to distinguish from legitimate email. The filtering paradigm needs a new anchor, and that anchor is infrastructure verification.

4. The financial damage: what the government data says

For anyone who evaluates security threats in dollar terms, the government data is unambiguous.

The FBI IC3’s 2025 Annual Report documents $20.877 billion in total cybercrime losses across 1,008,597 complaints, a 26% increase over 2024. Within that aggregate, the most relevant signal for the AI-phishing thesis is the trajectory of direct phishing and spoofing losses: $215.8 million in 2025, up from $70 million in 2024, a tripling in a single year. That tripling coincides precisely with the AI-phishing timeline documented by ENISA and IBM.

IBM’s Cost of a Data Breach 2025 documents the per-incident economics: $4.8 million average for a phishing-initiated breach. The US average breach cost hit an all-time record of $10.22 million, up 9% year-over-year even as the global average declined. Regulatory fines are a growing cost driver: 32% of breaches resulted in fines, with 48% of those fines exceeding $100,000.

Microsoft’s Digital Defense Report 2025, drawing from telemetry across 5 billion emails screened daily, confirms that 28% of breaches investigated by Microsoft’s Detection and Response Team were initiated through phishing or social engineering, the single largest attack vector. IBM, FBI, Microsoft, and ENISA data converge on the same conclusion: phishing is the #1 vector, AI is accelerating it, and losses are compounding. That leaves no room for the argument that this is a future risk. It is a current-quarter P&L exposure.

The deepfake dimension adds a layer that email filters cannot address at all. UC Berkeley’s California Management Review documents a specific case: a $25 million deepfake video-call fraud in Hong Kong (2024), where a finance worker authorized payment after a video call with a deepfake impersonation of the company’s CFO. ENISA’s Public Administration Threat Landscape predicts that ‘generative LLMs, voice-cloning and face-swap tools will be used for phishing, vishing attacks and misinformation/disinformation’ against government entities, a convergence of email, voice, and video impersonation.

“Direct phishing and spoofing losses tripled in a single year, from $70 million in 2024 to $215.8 million in 2025, the sharpest single-year increase in FBI IC3 history.”, FBI IC3 2025 Internet Crime Report (PDF)

5. The defense that still works: why email authentication matters more, not less

Here is the insight that most AI-phishing coverage misses. The rise of AI-generated content does not make all defenses equally obsolete. It makes content-based defenses obsolete. Infrastructure-based defenses (email authentication) become more important, not less, because they are the only verification layer that doesn’t depend on analyzing text quality.

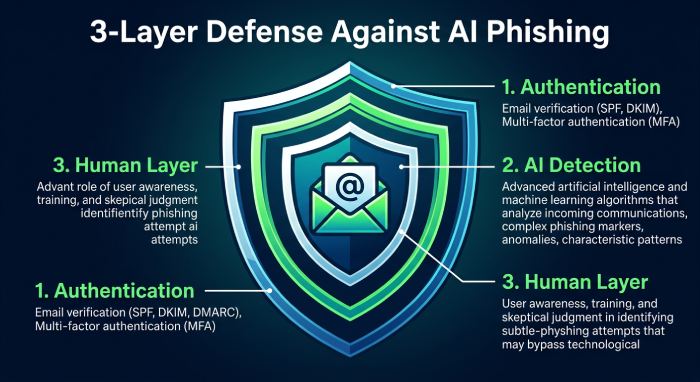

SPF verifies that the sending server’s IP address is authorized by the domain owner. DKIM verifies that the message has not been tampered with in transit and that it was signed by a key the domain owner published. DMARC ties SPF and DKIM together with domain alignment and tells receiving servers what to do when verification fails. None of these protocols examine the content of the email. They examine the infrastructure the email came from.
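How the three checks combine can be sketched in a few lines. This is a minimal illustration of the DMARC pass rule (SPF or DKIM must pass, and the passing identifier must align with the From domain), not a production evaluator. All names here (`org_domain`, `aligned`, `AuthResult`, `dmarc_passes`) are hypothetical, and the organizational-domain logic is a deliberate simplification: real implementations consult the Public Suffix List.

```python
from dataclasses import dataclass


def org_domain(domain: str) -> str:
    """Naive organizational domain: the last two labels.

    Assumption: real implementations use the Public Suffix List,
    so this shortcut is wrong for suffixes such as co.uk.
    """
    labels = domain.lower().rstrip(".").split(".")
    return ".".join(labels[-2:])


def aligned(from_domain: str, auth_domain: str, strict: bool = False) -> bool:
    """Check alignment between the From domain and an authenticated domain."""
    if strict:
        return from_domain.lower() == auth_domain.lower()
    return org_domain(from_domain) == org_domain(auth_domain)


@dataclass
class AuthResult:
    spf_pass: bool    # SPF passed for the envelope sender domain
    spf_domain: str   # domain that SPF was evaluated against
    dkim_pass: bool   # a DKIM signature verified
    dkim_domain: str  # the d= domain of that signature


def dmarc_passes(from_domain: str, result: AuthResult) -> bool:
    """DMARC passes if SPF or DKIM passes and that identifier aligns with From."""
    spf_ok = result.spf_pass and aligned(from_domain, result.spf_domain)
    dkim_ok = result.dkim_pass and aligned(from_domain, result.dkim_domain)
    return spf_ok or dkim_ok


# Spoofed mail: SPF passes, but only for the attacker's own domain.
spoof = AuthResult(True, "attacker.example", False, "")
# Legitimate mail: signed by a subdomain of the From domain.
legit = AuthResult(False, "bounce.vendor.example", True, "mail.your-bank.com")

print(dmarc_passes("your-bank.com", spoof))
print(dmarc_passes("your-bank.com", legit))
```

Nothing in the function reads the message body, which is the point: the verdict is the same whether the prose is clumsy or flawless.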

This is the critical distinction in the AI era. An AI-generated phishing email that is grammatically perfect, contextually appropriate, and indistinguishable from legitimate communication can pass content-based filters that rely on the old tells. But if the email claims to be from your-bank.com, and neither an SPF pass for a your-bank.com envelope sender nor a valid DKIM signature from your-bank.com is present, DMARC fails and the email fails authentication regardless of how perfect the content is.
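Receiving servers typically record these verdicts in an `Authentication-Results` header (RFC 8601), so downstream tooling can read them without inspecting the body. The sketch below uses only the Python standard library. The message, the `mx.receiver.example` server name, and the `auth_verdicts` helper are all hypothetical, and the parsing is simplified: it assumes the authserv-id is the first token before the first semicolon and keeps only the first verdict per method.

```python
import email
import re

RESULT_RE = re.compile(r"\b(spf|dkim|dmarc)=([a-z]+)", re.IGNORECASE)


def auth_verdicts(raw_message: str, trusted_authserv_id: str) -> dict:
    """Extract spf/dkim/dmarc verdicts stamped by a trusted receiving server."""
    msg = email.message_from_string(raw_message)
    verdicts = {}
    for header in msg.get_all("Authentication-Results", []):
        # The authserv-id is the first token, before the first semicolon.
        authserv_id = header.split(";", 1)[0].strip()
        if authserv_id.lower() != trusted_authserv_id.lower():
            continue  # skip headers that a sender could have injected
        for method, result in RESULT_RE.findall(header):
            verdicts.setdefault(method.lower(), result.lower())
    return verdicts


# Hypothetical message: flawless content, failing authentication.
RAW = (
    "Authentication-Results: mx.receiver.example;\n"
    " spf=pass smtp.mailfrom=attacker.example;\n"
    " dkim=none;\n"
    " dmarc=fail header.from=your-bank.com\n"
    "From: Support <support@your-bank.com>\n"
    "Subject: Account notice\n"
    "\n"
    "Please review the attached statement.\n"
)

verdicts = auth_verdicts(RAW, "mx.receiver.example")
print(verdicts)
```

Note that SPF passes here, but for the attacker's own domain. The DMARC verdict is the one that ties the result back to the domain shown in the From line.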

The academic evidence supports this framing. The ChatSpamDetector study demonstrated that GPT-4, deployed as a phishing detector rather than an attacker, achieved 99.70% accuracy, outperforming all other models and traditional baseline systems. AI can fight AI on the content-analysis front. But deploying AI-based detection requires deliberate investment, continuous model updates, and significant compute resources. Email authentication, by contrast, requires DNS records. The asymmetry favors authentication: it is cheaper, more deterministic, and independent of the arms race between AI attackers and AI defenders.

What it means: Authentication doesn’t replace content filtering; it complements it by providing a verification layer that AI cannot circumvent through better prose. In a world where AI-generated content is indistinguishable from legitimate content, the question ‘who sent this?’ becomes more important than the question ‘what does this say?’ SPF, DKIM, and DMARC answer ‘who sent this?’ at the infrastructure level. That is why authentication investment accelerates in the AI era rather than being rendered obsolete by it.

6. The action plan: defending against AI-powered phishing

The threat model has changed. The defensive playbook needs to change with it. The following steps are ordered by impact and urgency.

Layer 1: Make authentication the primary trust signal

- Move DMARC to enforcement (p=reject) on every active domain. At p=reject, receiving servers are asked to reject mail that fails DMARC, meaning neither SPF nor DKIM passes with a domain aligned to the From address, regardless of how convincing the content is. This is the single highest-leverage defensive action against AI-generated domain spoofing.

- Flatten SPF to stay under the 10-lookup ceiling. The RFC 7208 limit doesn’t care about AI threats, but a PermError from excessive lookups means SPF cannot pass, which leaves DMARC dependent on DKIM alone and can cause legitimate mail to fail. Automate the refresh, because vendor IP pools can change as often as weekly.

- Enable DKIM signing on every sending source with YOUR domain in d=. DKIM provides the cryptographic backstop when SPF fails. Configure DKIM with your domain as the signing domain, not the vendor’s, to ensure DMARC alignment.

- Authenticate parked domains. Publish v=spf1 -all and DMARC p=reject on every domain that doesn’t send email. Attackers specifically look for unprotected domains like these to spoof.
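Two of the Layer 1 steps lend themselves to a quick check. The sketch below counts the terms in a single SPF record that consume a DNS lookup under RFC 7208, and builds the two records named above for a parked domain. The helper names and the vendor domains are hypothetical. The counter looks only at the top-level record; an accurate total would also need to resolve every `include:` and `redirect=` target recursively, which requires live DNS and is omitted here.

```python
# Terms that each consume one DNS lookup under RFC 7208, section 4.6.4.
LOOKUP_TERMS = ("include", "a", "mx", "ptr", "exists", "redirect")
SPF_LOOKUP_LIMIT = 10


def count_direct_lookups(spf_record: str) -> int:
    """Count lookup-consuming terms in one SPF record (no recursion)."""
    count = 0
    for term in spf_record.split()[1:]:  # skip the "v=spf1" version tag
        name = term.lstrip("+-~?").lower()
        # Strip the argument: "include:x", "redirect=x", "a/24", "mx:x".
        for sep in (":", "=", "/"):
            name = name.split(sep, 1)[0]
        if name in LOOKUP_TERMS:
            count += 1
    return count


def parked_domain_records(domain: str) -> dict:
    """TXT records for a domain that never sends mail, as named in the text."""
    return {
        domain: "v=spf1 -all",
        f"_dmarc.{domain}": "v=DMARC1; p=reject",
    }


# Hypothetical record for a domain using two vendors.
record = (
    "v=spf1 include:_spf.vendor-a.example include:_spf.vendor-b.example "
    "ip4:192.0.2.0/24 mx -all"
)
direct = count_direct_lookups(record)
print(f"{direct} direct lookups (limit {SPF_LOOKUP_LIMIT}, nested includes not counted)")

for name, value in parked_domain_records("parked.example").items():
    print(f'{name} TXT "{value}"')
```

A record that looks comfortably under the limit at the top level can still exceed it once nested includes are expanded, which is why the refresh is worth automating.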

Layer 2: Deploy AI-augmented detection alongside authentication

- Evaluate AI-powered email security platforms that analyze behavioral signals, not just content. The ChatSpamDetector research shows GPT-4 can achieve 99.7% phishing detection, but only when deployed. Most enterprises still rely on rule-based filters trained on human-written phishing patterns.

- Implement BIMI (Brand Indicators for Message Identification) on enforced DMARC domains. BIMI displays your verified brand logo next to authenticated emails in supporting clients. In an AI-phishing environment, visual brand verification provides the trust signal that grammar quality can no longer provide.

- Monitor DMARC aggregate reports (rua=) with AI-enhanced analysis. DMARC reports reveal attempted impersonation at scale. AI-powered DMARC analysis can identify pattern shifts, such as sudden spikes in unauthorized sending from new geographies, faster than manual review.
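Before layering any AI analysis on top, the aggregate reports themselves are plain XML and simple to summarize. The sketch below tallies message counts per source IP where both the DKIM and SPF results under `policy_evaluated` are not `pass`. The element names follow the aggregate report schema in RFC 7489, but the sample report, its IP addresses, and the `failing_sources` helper are made up for illustration. Real reports usually arrive as compressed attachments and would need unpacking first.

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Hypothetical, heavily trimmed aggregate report.
SAMPLE_REPORT = """<?xml version="1.0"?>
<feedback>
  <record>
    <row>
      <source_ip>192.0.2.10</source_ip>
      <count>120</count>
      <policy_evaluated>
        <disposition>none</disposition>
        <dkim>pass</dkim>
        <spf>pass</spf>
      </policy_evaluated>
    </row>
    <identifiers><header_from>example.com</header_from></identifiers>
  </record>
  <record>
    <row>
      <source_ip>203.0.113.77</source_ip>
      <count>45</count>
      <policy_evaluated>
        <disposition>reject</disposition>
        <dkim>fail</dkim>
        <spf>fail</spf>
      </policy_evaluated>
    </row>
    <identifiers><header_from>example.com</header_from></identifiers>
  </record>
</feedback>
"""


def failing_sources(report_xml: str) -> Counter:
    """Sum message counts per source IP where both DKIM and SPF failed DMARC."""
    root = ET.fromstring(report_xml)
    failures = Counter()
    for record in root.iter("record"):
        row = record.find("row")
        evaluated = row.find("policy_evaluated")
        dkim = evaluated.findtext("dkim", default="fail")
        spf = evaluated.findtext("spf", default="fail")
        if dkim != "pass" and spf != "pass":
            source_ip = row.findtext("source_ip")
            failures[source_ip] += int(row.findtext("count", default="0"))
    return failures


for ip, count in failing_sources(SAMPLE_REPORT).most_common():
    print(f"{ip}: {count} messages failed DMARC")
```

Tracking these per-source totals over time is one simple way to surface the sudden spikes mentioned above.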

Layer 3: Update the human layer

- Retrain security awareness programs for the AI era. The old playbook (‘look for grammar errors, suspicious formatting, urgent tone’) fails against AI-generated content. Train employees to verify the sender’s domain and authentication status, not the quality of the prose.

- Implement out-of-band verification for financial transactions. The $25 million deepfake CFO case demonstrates that visual and voice impersonation are now viable. No financial transaction above a defined threshold should proceed on the basis of a single communication channel, whether email, phone, or video.

- Run AI-generated phishing simulations. The 9,000-person university study demonstrated a 10% credential-submission rate from LLM-crafted phishing. Your employees should encounter AI-generated phishing in training before they encounter it in production.
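The out-of-band rule above is simple enough to express as a policy check. This is a sketch only: `PaymentRequest`, `may_proceed`, the 10,000 threshold, and the two-channel requirement are hypothetical placeholders, and each organization would set its own values.

```python
from dataclasses import dataclass, field


@dataclass
class PaymentRequest:
    amount: float
    # Channels on which the request was independently confirmed,
    # for example {"email", "phone"}.
    confirmed_channels: set = field(default_factory=set)


def may_proceed(
    request: PaymentRequest,
    threshold: float = 10_000.0,  # hypothetical threshold
    required_channels: int = 2,   # hypothetical requirement
) -> bool:
    """Allow large payments only after confirmation on multiple channels."""
    if request.amount < threshold:
        return True
    return len(request.confirmed_channels) >= required_channels


video_only = PaymentRequest(25_000_000, {"video"})
confirmed = PaymentRequest(25_000_000, {"video", "phone"})

print(may_proceed(video_only))
print(may_proceed(confirmed))
```

The check is only as good as the independence of the channels. A confirmation counts only if the verifier initiates it through contact details already on file, not through a number or link supplied in the request itself.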

7. Bottom line: the last reliable signal

The AI-phishing data from 2023 to 2026 tells a story with a clear inflection point. In 2023, AI-generated phishing was an academic curiosity explored in controlled experiments. By 2025, 80%+ of social engineering was AI-supported, phishing losses tripled in a single year, and 16% of all data breaches involved AI-powered attacks. The transition from experimental to dominant took approximately 18 months.

Three separate studies (Harvard with 112 participants, a multivector lab study with 30 participants, and a real-world 9,000-person university deployment) converge on the same conclusion: AI-generated phishing matches or exceeds human phishing in effectiveness while dramatically reducing the attacker’s required skill and time investment. Purdue and RIT researchers describe the result precisely: ‘LLM generated emails are grammatically sound, contextually relevant, and linguistically natural.’ The traditional tells are gone.

Content-based detection, the paradigm that has anchored email security for two decades, was designed for a world where phishing had detectable content signatures. That world ended. Gmail, SpamAssassin, and Proofpoint are demonstrably evaded by LLM-rephrased content. The filter-evasion results are not marginal improvements; they are significant reductions in detection rates across the world’s most widely deployed email security platforms.

What remains is infrastructure verification. SPF, DKIM, and DMARC do not analyze content. They verify the sending infrastructure: IP authorization, cryptographic message integrity, and domain alignment. An AI-generated email with perfect prose but unauthorized infrastructure fails authentication just as reliably as a misspelled one. In a world where content quality can no longer distinguish attacker from sender, infrastructure verification is the last reliable machine-verifiable signal.

If you take one conclusion into your next security review, take this: AI made phishing content indistinguishable from legitimate email. The protocols that verify who sent the email, not what the email says, are the only defense layer that scales against the AI-generated threat. Every dollar spent on email authentication in 2026 is worth more than it was in 2023, because the problem authentication solves, verifying sender identity at the infrastructure level, is the one problem AI has not solved for the attacker.

References

Every source cited in this article is a downloadable PDF: IBM annual reports, ENISA threat landscape reports, FBI IC3 government data, a Black Hat conference paper, academic papers and arXiv preprints from Harvard, IIT Jammu, Purdue, RIT, and UC Berkeley, and Microsoft’s Digital Defense Report. No web articles or blog posts.

- IBM X-Force Threat Intelligence Index 2025 (PDF) https://www.bosconsulting.lt/reports/ibm-x-force-threat-intelligence-index-2025-report.pdf

- IBM Cost of a Data Breach Report 2025 (PDF) https://www.bakerdonelson.com/webfiles/Publications/20250822_Cost-of-a-Data-Breach-Report-2025.pdf

- ENISA Threat Landscape 2025 (PDF) https://www.enisa.europa.eu/sites/default/files/2025-11/ENISA%20Threat%20Landscape%202025.pdf

- ENISA Sectorial Threat Landscape: Public Administration 2024 (PDF) https://www.enisa.europa.eu/sites/default/files/2025-12/ENISA%20Public%20Administration%20TL%202024%20-%20v1.1.pdf

- Heiding et al., ‘Devising and Detecting Phishing’ (Black Hat USA 2023, PDF) https://i.blackhat.com/BH-US-23/Presentations/US-23-Heiding-Devicing-and-Detecting-Phishing-wp.pdf

- Mishra et al., ‘Jailbreaking Generative AI’ (IIT Jammu, arXiv PDF) https://arxiv.org/pdf/2503.01395

- Jailbreaking GenAI: Multivector Phishing Threats (arXiv PDF) https://arxiv.org/pdf/2507.12185

- Lateral Phishing with LLMs, Tier-1 University Study (arXiv PDF) https://arxiv.org/pdf/2401.09727

- SpearBot: LLM Generative-Critique Spear-Phishing (arXiv PDF) https://arxiv.org/pdf/2412.11109

- Next-Generation Phishing: LLM Agents Empower Attackers (arXiv PDF) https://arxiv.org/pdf/2411.13874

- Robust ML Detection of LLM-Generated Phishing (Purdue/RIT, arXiv PDF) https://arxiv.org/pdf/2510.11915

- ChatSpamDetector: LLMs for Phishing Detection (arXiv PDF) https://arxiv.org/pdf/2402.18093

- FBI IC3 2025 Internet Crime Report (PDF) https://www.ic3.gov/AnnualReport/Reports/2025_IC3Report.pdf

- Microsoft Digital Defense Report 2025, CISO Summary (PDF) https://cdn-dynmedia-1.microsoft.com/is/content/microsoftcorp/microsoft/bade/documents/products-and-services/en-us/security/CISO-Executive-Summary-MDDR-2025.pdf

- UC Berkeley CMR, ‘Data Breaches: Fight AI with AI’ (PDF) https://cmr.berkeley.edu/assets/documents/sample-articles/uddin-et-al-2025-data-breaches-fight-ai-with-ai.pdf

- Decoding the Threat Landscape: ChatGPT, FraudGPT, WormGPT (arXiv PDF) https://arxiv.org/pdf/2310.05595

General Manager

Founder and General Manager of DuoCircle. Product strategy and commercial lead for AutoSPF's 2,000+ customer base.